Last Updated: March 2026

Ready to build something with the Femto Bolt? Here's what I've learned from actually getting code running. This guide covers the practical parts: setup, the SDK, and getting to working code as fast as possible.

The good news: if you've used Azure Kinect, you're 90% there already.

Getting Started

First things first: you'll need a few things in place.

What You Need

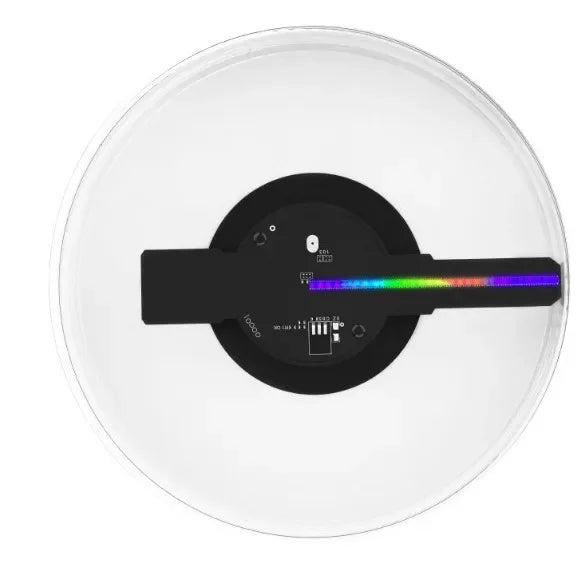

- Femto Bolt camera

- USB-C cable (included)

- Computer with USB 3.0

- Ubuntu 20.04/22.04 or Windows 10/11

Install the SDK

Orbbec provides an installer for Windows. On Linux you build from source, which is straightforward if you've built anything with CMake before.

    # Ubuntu
    sudo apt install libusb-1.0-0-dev libudev-dev pkg-config cmake

    # Download SDK from Orbbec website
    tar -xf OrbbecSDK_linux_x64_2.x.x.tar.gz
    cd OrbbecSDK
    mkdir build && cd build
    cmake ..
    make -j4
    sudo make install
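Before running the build, it can help to confirm that the tools it relies on are on your PATH. The following is a minimal sketch using only the Python standard library. The tool list is inferred from the commands above rather than taken from an official requirements list, and `missing_tools` is a hypothetical helper name, not part of the Orbbec SDK.

```python
import shutil

# Tools invoked by the build commands in this guide (inferred, not an official list).
REQUIRED_TOOLS = ["cmake", "make", "pkg-config", "tar"]


def missing_tools(tools=REQUIRED_TOOLS):
    """Return the entries of `tools` that cannot be found on PATH."""
    return [tool for tool in tools if shutil.which(tool) is None]


missing = missing_tools()
if missing:
    print("Missing build tools:", ", ".join(missing))
else:
    print("All build tools found.")
```

If anything is reported missing, install it with your package manager before running `cmake ..`.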

Your First Program

Let's get something on screen. Python is easiest for starting out.

Python Example

Install the SDK Python wrapper:

    pip install pyorbbec

Basic depth viewer:

    import pyorbbec as ob
    import cv2
    import numpy as np

    # Create pipeline
    pipeline = ob.Pipeline()

    # Configure streams
    config = ob.Config()
    config.enable_stream(ob.StreamType.DEPTH, 1024, 1024, ob.FPS.FPS_15)
    config.enable_stream(ob.StreamType.COLOR, 1920, 1080, ob.FPS.FPS_30)

    # Start
    pipeline.start(config)
    print("Press 'q' to quit")

    while True:
        # Wait up to 1000 ms for the next set of frames
        frames = pipeline.wait_for_frames(1000)
        if frames:
            depth = frames.get_depth_frame()
            if depth:
                # Convert to visualization: scale 16-bit depth down to 8 bits,
                # then apply a color map
                depth_data = depth.to_numpy()
                color_mapped = cv2.applyColorMap(
                    cv2.convertScaleAbs(depth_data, alpha=0.03),
                    cv2.COLORMAP_JET
                )
                cv2.imshow("Femto Bolt Depth", color_mapped)

        # Poll the keyboard once per loop iteration
        if cv2.waitKey(1) & 0xFF == ord('q'):
            break

    # Release the camera and close the window
    pipeline.stop()
    cv2.destroyAllWindows()

What This Does

This connects to the camera, grabs depth frames, color-maps them for visualization, and displays them in a window. It's the "hello world" of depth cameras, and it works with the Femto Bolt out of the box.
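The `alpha=0.03` in the viewer decides which depth range gets spread across the 8-bit color map. Here is a small worked sketch of that arithmetic in plain Python. It assumes depth values are in millimetres (the example above does not say so explicitly), and `scale_to_uint8` and `alpha_for_range` are hypothetical helper names that only mimic the scale, round, and clamp behaviour of `cv2.convertScaleAbs` for a single value.

```python
def scale_to_uint8(depth_mm, alpha=0.03):
    """Mimic the scaling step: |depth * alpha|, rounded, clamped to 0..255."""
    return min(255, round(abs(depth_mm * alpha)))


def alpha_for_range(max_depth_mm):
    """Pick alpha so that max_depth_mm maps to the top of the 8-bit range."""
    return 255.0 / max_depth_mm


# Assumed unit: millimetres.
for depth_mm in (500, 1000, 5000, 8500, 10000):
    print(depth_mm, "mm ->", scale_to_uint8(depth_mm))
```

With `alpha=0.03`, everything beyond roughly 8.5 m saturates at 255. For a close-range scene, a larger value such as `alpha_for_range(3000)` spreads the colors over the first 3 m instead.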

If You Already Use Azure Kinect

Here's why you might actually switch: it's practically the same API.

The Migration Path

    // Azure Kinect code
    // (config is a k4a_device_configuration_t set up earlier)
    k4a_device_t device;
    k4a_device_open(0, &device);
    k4a_device_start_cameras(device, &config);

    // Femto Bolt: exactly the same
    k4a_device_t device;
    k4a_device_open(0, &device);
    k4a_device_start_cameras(device, &config);

No, really, that's it. The API is identical because Orbbec provides a K4A wrapper that implements the Azure Kinect Sensor SDK interface on top of its own SDK. Once your project is built against that wrapper, your existing code works with the new hardware.

What Changes

- Device opening (same function)

- Configuration (same structures)

- Frame retrieval (same methods)

- Nothing else, honestly

ROS Integration

If you're using ROS, there's good news: Orbbec provides a ROS wrapper.

    # Install ROS wrapper
    cd ~/catkin_ws/src
    git clone https://github.com/orbbec/ros_orbbec_sdk.git
    cd ..
    catkin_make

    # Launch (source the workspace first so roslaunch can find the package)
    source devel/setup.bash
    roslaunch orbbec_camera femto_bolt.launch

Once running, you'll have standard ROS topics:

- /camera/depth/image_rect_raw

- /camera/color/image_raw

- /camera/points

- /camera/imu
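To check that depth data is actually arriving, a small subscriber on the depth topic is enough. The sketch below assumes ROS 1 with `rospy`, and that the depth image uses the common `16UC1` encoding (one unsigned 16-bit value per pixel), which this guide does not confirm. The node name and the helper `pixel_depth_mm` are hypothetical.

```python
import struct


def pixel_depth_mm(data, step, row, col, is_bigendian=False):
    """Read one 16-bit depth value from a 16UC1 image buffer.

    `step` is the row length in bytes, as in sensor_msgs/Image.
    """
    fmt = ">H" if is_bigendian else "<H"
    offset = row * step + col * 2  # 2 bytes per pixel
    return struct.unpack_from(fmt, data, offset)[0]


def on_depth(msg):
    """Subscriber callback: log the raw depth value at the image center."""
    import rospy  # imported here so the helper above works without ROS

    row, col = msg.height // 2, msg.width // 2
    depth = pixel_depth_mm(msg.data, msg.step, row, col, bool(msg.is_bigendian))
    rospy.loginfo("Center depth: %d (raw units, assumed mm)", depth)


def main():
    """Start a node that listens on the depth topic listed above."""
    import rospy
    from sensor_msgs.msg import Image

    rospy.init_node("femto_bolt_depth_listener")  # hypothetical node name
    rospy.Subscriber("/camera/depth/image_rect_raw", Image, on_depth, queue_size=1)
    rospy.spin()
```

Call `main()` from a script inside your catkin workspace while the launch file is running. If nothing is logged, `rostopic list` shows which topic names your driver version actually publishes.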

Common Issues

Here's what tends to trip people up:

Camera Not Found

- Check USB-C connection

- Try a different port (USB 3.0 required)

- Verify udev rules are installed on Linux
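On Linux, you can check whether the kernel sees the camera at all, and at what link speed, before blaming the SDK. The sketch below walks sysfs with the standard library only. It assumes Orbbec's USB vendor ID is `2bc5` (confirm with `lsusb` on your machine), and `find_usb_devices` is a hypothetical helper name.

```python
from pathlib import Path

ORBBEC_VENDOR_ID = "2bc5"  # assumed Orbbec USB vendor ID; confirm with `lsusb`


def find_usb_devices(vendor_id, sysfs_root="/sys/bus/usb/devices"):
    """Return (device_name, speed_mbps) for each USB device matching vendor_id."""
    matches = []
    root = Path(sysfs_root)
    if not root.is_dir():
        return matches  # not Linux, or sysfs is not mounted
    for dev in sorted(root.iterdir()):
        vendor_file = dev / "idVendor"
        if not vendor_file.is_file():
            continue  # entries such as interfaces carry no idVendor file
        if vendor_file.read_text().strip().lower() != vendor_id.lower():
            continue
        speed_file = dev / "speed"
        speed = speed_file.read_text().strip() if speed_file.is_file() else "unknown"
        matches.append((dev.name, speed))
    return matches


for name, speed in find_usb_devices(ORBBEC_VENDOR_ID):
    print(f"Found device {name} at {speed} Mbit/s")
```

No output means the device is not enumerating, which points at the cable or port. A reported speed of 480 means the camera negotiated USB 2.0, while 5000 or higher indicates a USB 3.x link.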

Low FPS

- USB 2.0 won't give you full speed

- Check that you're using a USB 3.0 port

- High resolution modes need bandwidth
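A quick back-of-the-envelope calculation shows why USB 2.0 falls short. The sketch below computes uncompressed data rates for the two stream modes used in the Python example. It assumes 2 bytes per depth pixel and 4 bytes per color pixel; in practice the color stream may be compressed, so treat the color figure as an upper bound. `stream_mb_per_s` is a hypothetical helper name.

```python
def stream_mb_per_s(width, height, bytes_per_pixel, fps):
    """Uncompressed data rate of one stream in megabytes per second."""
    return width * height * bytes_per_pixel * fps / 1e6


# Nominal signalling rates, before protocol overhead.
USB2_MB_PER_S = 480 / 8    # 480 Mbit/s = 60 MB/s
USB3_MB_PER_S = 5000 / 8   # 5 Gbit/s = 625 MB/s

depth = stream_mb_per_s(1024, 1024, 2, 15)   # 16-bit depth (assumed)
color = stream_mb_per_s(1920, 1080, 4, 30)   # 4-byte pixels (assumed, uncompressed)
total = depth + color

print(f"depth: {depth:.1f} MB/s, color: {color:.1f} MB/s, total: {total:.1f} MB/s")
print("fits USB 2.0:", total < USB2_MB_PER_S)
print("fits USB 3.0:", total < USB3_MB_PER_S)
```

Depth alone comes to about 31.5 MB/s, already more than half of USB 2.0's nominal 60 MB/s, and real-world throughput is lower than the nominal rate.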

Depth Looks Noisy

- ToF hates direct sunlight

- Move away from windows

- Check that the lens isn't dirty
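To tell whether a change such as moving the camera or cleaning the lens actually helped, it is useful to put a number on "noisy". One rough measure is the fraction of pixels with no depth reading. The sketch below assumes invalid pixels are reported as 0, which is the Azure Kinect convention and is assumed here for the Femto Bolt. `invalid_ratio` is a hypothetical helper, and the sample values are made up for illustration.

```python
def invalid_ratio(depth_values):
    """Fraction of pixels with no depth reading (value 0, by assumption)."""
    values = list(depth_values)
    if not values:
        return 0.0
    return sum(1 for v in values if v == 0) / len(values)


# Made-up sample rows for illustration, not measured data.
shaded_row = [1200, 1198, 1203, 0, 1201, 1199, 1200, 1202]
sunlit_row = [1200, 0, 0, 1350, 0, 0, 1100, 0]

print(f"shaded: {invalid_ratio(shaded_row):.1%} invalid")
print(f"sunlit: {invalid_ratio(sunlit_row):.1%} invalid")
```

For a full frame held in a NumPy array, `np.count_nonzero(depth_data == 0) / depth_data.size` computes the same ratio much faster.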

What's Next?

From here, you can build on this foundation:

- Body tracking using the Azure Kinect Body Tracking SDK

- Object detection with TensorFlow

- Point cloud processing with Open3D

- ROS navigation stack integration

The Orbbec documentation has tutorials for all of these. Start with getting depth viewing working, then expand from there.
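As a first step toward point clouds, here is a minimal sketch of how a single depth pixel turns into a 3D point under a simple pinhole model. The intrinsics below are hypothetical placeholders, not Femto Bolt calibration values; real code should read them from the device and account for lens distortion, which this sketch ignores. Depth is assumed to be in millimetres.

```python
def deproject(u, v, depth_mm, fx, fy, cx, cy):
    """Back-project pixel (u, v) with depth in mm to (x, y, z) in metres.

    Pinhole model without distortion: x = (u - cx) * z / fx, y = (v - cy) * z / fy.
    """
    z = depth_mm / 1000.0
    x = (u - cx) * z / fx
    y = (v - cy) * z / fy
    return (x, y, z)


# Hypothetical intrinsics for illustration only.
FX, FY, CX, CY = 500.0, 500.0, 512.0, 512.0

print(deproject(512, 512, 2000, FX, FY, CX, CY))  # pixel at the principal point
print(deproject(762, 512, 2000, FX, FY, CX, CY))  # 250 px to the right of it
```

A pixel at the principal point lies straight ahead on the optical axis. With these placeholder values, a pixel 250 columns to the right at 2 m depth lands 1 m to the side. Applying the same formula to every valid pixel gives the point set that libraries such as Open3D work with.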

Conclusion

The Femto Bolt is refreshingly straightforward to develop with. Azure Kinect compatibility is the key feature: it means you're not starting from zero.

If you've built anything with depth cameras before, you'll be running samples within an hour. If you're new, the Python example above is a practical starting point.

Start building: Get your Femto Bolt on OpenELAB

openelab.de

openelab.com